
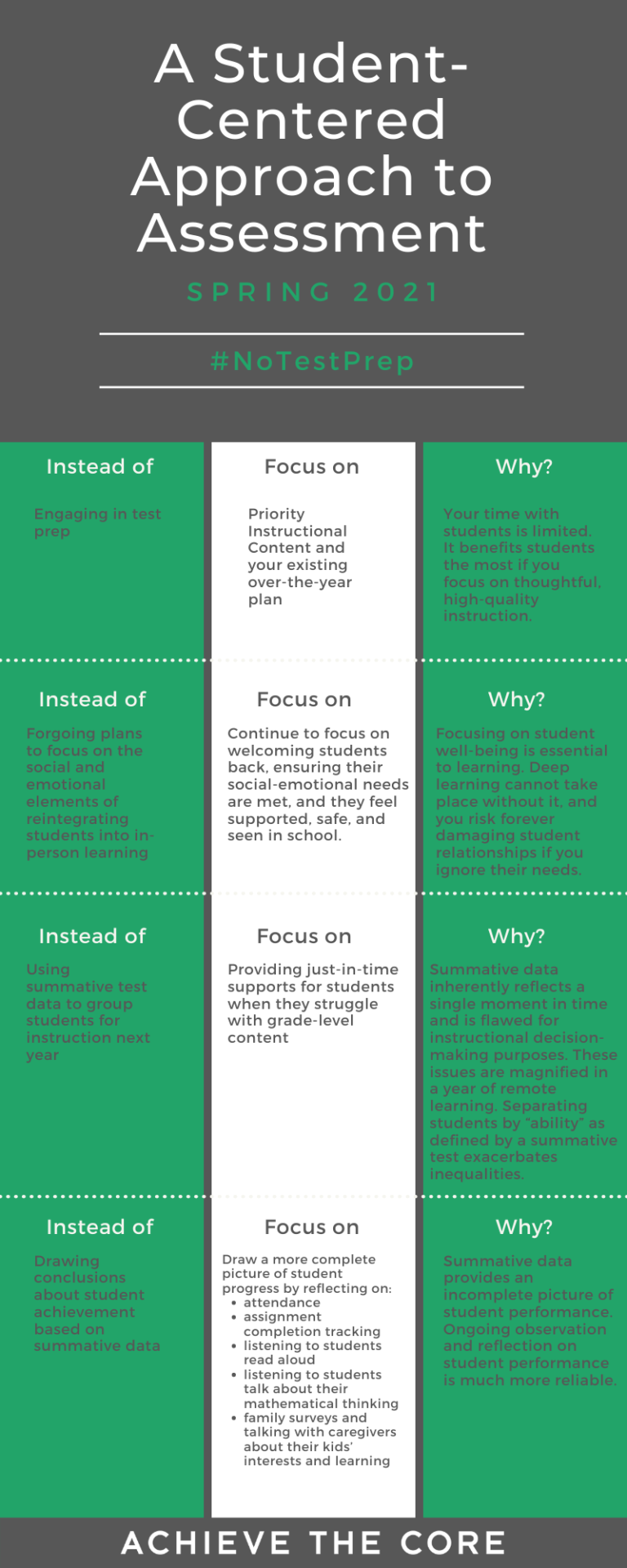

In the last few weeks I have been diving into data and assessment. This comes right after my course's last unit, which involved AI. During a synchronous Zoom meeting with some of my classmates, one of them shared a realization: AI doesn't know students the way teachers know students. This classmate had made a lesson and assessment plan using AI and noticed that while the overall structure was good, AI couldn't compete with a teacher's ability to know their students on a personal level: their growth, how and where they struggle, how and where they flourish, and what helps them achieve the most success. With this conversation in mind, I dove into exploring assessment data with a student-centered approach.

As I explored, one theme kept emerging: how to create assessments that produce data reflective of the whole student. Assessment data can be a really useful tool, but it can also harm students if it is used the wrong way. Administrators often pull assessment data from a class and reduce students to mere numbers and labels. This strips the humanity, fairness, and equitable feedback out of assessment and defeats its purpose. Don't we assess students to find out how they are doing overall? How can we tell how they are doing as a human from a few true/false or multiple-choice questions? The reality is that we can't.

That said, there is a valid need for summative assessment data on a larger scale. Even though it may seem frustrating in a single classroom, this data is important to the growth of schools and districts overall. As a teacher, learning how to approach large assessments with students, and what to do with the data afterward, can change your relationship with assessment. This infographic, put together by Keown et al. (2021), is a wonderful jumping-off point for teachers. In particular, the third row, "using summative test data to group students," stood out to me as especially important.
Assigning students labels based on assessment data can be really harmful. Imagine you missed a few days of school during the height of fraction instruction and have not yet been able to catch up. Test day comes around and you still do not understand fractions, but the mathematics portion of the assessment is 50% fraction-based. Through no fault of your own, you can't answer any of those questions, you run out of time, and you nearly fail the assessment. Because you scored in a certain percentile, you now have to spend an hour of your day in math intervention. This causes you to miss key time with your classmates learning ELA content. You then fall behind in ELA, and the cycle continues.

Just as the inadequate AI lesson highlighted the importance of humanity in education, so does interpreting assessment data. Teachers know their students. If this student's teacher looked at the assessment results, they would be able to identify why the student scored the way they did, provide accurate feedback, and prevent the student from being mislabeled.

References:

Keown, K., Fossum, A., Li, J., & Young, J. (2021, March 25). What the data can't tell us [Image]. Achieve The Core.




August 2023
