
Teaching and facilitating are not the same

Online teachers are teachers online. Online teachers are not facilitators, and facilitators are not teachers. Teachers have the job of teaching content, incorporating differentiation and proper pedagogical strategies, and customizing their teaching approaches to meet learner needs. A facilitator merely facilitates learning. They can be seen as more of a formality than a necessity, and if you take away the facilitator, the same learning could still be achieved. While learning can happen through a student-facilitator relationship, a proper online classroom is not composed of students and a facilitator, but rather students and a teacher. Teachers are professional experts in the art of teaching, and not just subject matter experts. The “learnification” phenomenon paints teachers as subject matter experts and students as autonomous learners, almost independent of one another. The threat of online learning is placing “teacher subject expertise and critical professional judgment in the background of educational practice” (Bayne et al., 2020). This approach to ‘teaching’ places the subject matter expert at the center of the classroom, rather than focusing on “the growing expertise of the students” (Morris & Stommel, 2018) and customizing the learning experience for their growth. Facilitators know content and can present it clearly. Facilitation of content is seen in YouTube videos, mass online courses, and podcasts. Teachers, though, are master pedagogues, and “their knowledge of the discipline and their knowledge of pedagogy interact” (National Academies of Sciences, Engineering, and Medicine, 2000) to produce a true learning environment. Due to their structure, online courses can easily face the threat of facilitation, and what could have been a true online classroom can quickly turn into an online dump of knowledge.
Baumgartner’s Active Learning While Physically Distancing chart presents how various communication strategies can be adapted to different learning modalities. This chart is not only a wonderful resource for teachers who need to alter or enhance their learning communities, but it also highlights the ways in which online learning compares to face-to-face learning. It is important to note that switching from a face-to-face community to an online one does not make active learning strategies impossible; they can still be implemented with as much importance as in face-to-face learning. This chart is one of many resources available for teachers looking to ensure their online courses are taught, and not just facilitated.

Quality online education is not “one size fits all”

There is a lack of individualization in many online courses. They can be created largely for the general public, without consideration of unique learners. As with any course designed for a high volume of learners, there is minimal room for individualization. Massified online courses resemble massified in-person courses. While the 500-person lecture can be a convenient way for universities to deliver content to a large number of students in a timely and cost-effective manner, the education these learners receive is not going to be comparable to the education they would receive in a smaller 20-30 person classroom. The teachers in these large courses are reduced to facilitators, and students can quickly become just a number. This Pacansky-Brock infographic touches on the four principles for humanizing online instruction. The third key principle is awareness. Pacansky-Brock explains that “awareness is achieved by learning about who your students are and how you can support them” (Pacansky-Brock, 2020), and in order to learn about your students, there must be some aspect of individualization and/or individualized learning.
To even come close to a sense of individualized learning, courses must incorporate smaller recitations, which can be difficult to facilitate in mass online education. In general, “massified higher education cannot, in many instances, be experienced as intimate” (Bayne et al., 2020). Courses developed for the general public can be a wonderful supplement for learners who are looking for a convenient learning experience, but without the ability to customize the course to the learner’s needs, these online courses fall short for many students. While there may be structured opportunities for engagement with peers and/or the instructor, the overall outline of mass online courses has little room for the flexibility in pedagogy and engagement opportunities that many learners need in order to thrive. So, the real issue here isn’t with online education as a whole, but rather with massified learning. The unfortunate reality is that many online courses are created for public use, and in turn lack the quality of in-person individualized learning. Online courses that are provided in a smaller environment, mimicking the structure of small in-person courses, can still provide the one-on-one and specialized instruction that many students seek.

Educational technology raises and resolves constraints of education simultaneously

In recent years, the rise of online education has proven to be a tremendous solution to many issues presented in K-12 and higher education. During the Covid-19 pandemic, the world started to embrace online learning and educational technology as a solution to many of its problems. Students were able to learn from the safety of their homes, as teachers discovered ways to transition their content from in-person to online. While the implementation of online learning and a broader educational technology toolkit resolved the immediate problem at hand, teachers uncovered new problems that were not present before implementing these new technologies.
Teachers found that technology “has great potential to enhance student achievement and teacher learning, but only if it is used appropriately” (National Academies of Sciences, Engineering, and Medicine, 2000). In other words, educational technology comes with its own constraints, and isn’t a magical fix to all problems faced in the classroom. Lo and Hew’s (2017) research on flipped classrooms emphasized some of these constraints. As a solution, they proposed 10 guidelines to implement along with a flipped classroom in order to mitigate some of these challenges. The guidelines covered student-related, faculty-related, and operational challenges. As found in this study, there are unique affordances of educational technology and online learning, but these tools must be looked at with a critical eye and adjusted appropriately. “Trying to make an online class function exactly like an on-ground class is a missed opportunity” (Morris & Stommel, 2018). Knowing where your technology falls flat, and not ignoring these constraints but rather using its affordances to your advantage, can provide students with an unmatched educational experience. Educational technology is not meant to replace traditional education. When used appropriately and in combination with proper instruction, teachers and students can maximize its potential.

References

Baumgartner, J. (n.d.). Teaching tools: Active learning while physically distancing. Louisiana State University.

Bayne, S., Evans, P., Ewins, R., Knox, J., Lamb, J., Macleod, H., O'Shea, C., Ross, J., Sheail, P., & Sinclair, C. (2020). The manifesto for teaching online. MIT Press.

Lo, C. K., & Hew, K. F. (2017). A critical review of flipped classroom challenges in K-12 education: Possible solutions and recommendations for future research. Research and Practice in Technology Enhanced Learning, 12(4), 1–22.

Morris, S. M., & Stommel, J. (2018). An urgency of teachers: The work of critical digital pedagogy. Pressbooks.

National Academies of Sciences, Engineering, and Medicine. (2000). How People Learn: Brain, Mind, Experience, and School: Expanded Edition. Washington, DC: The National Academies Press.

Pacansky-Brock, M. (2020). How to humanize your online class, version 2.0 [Infographic].


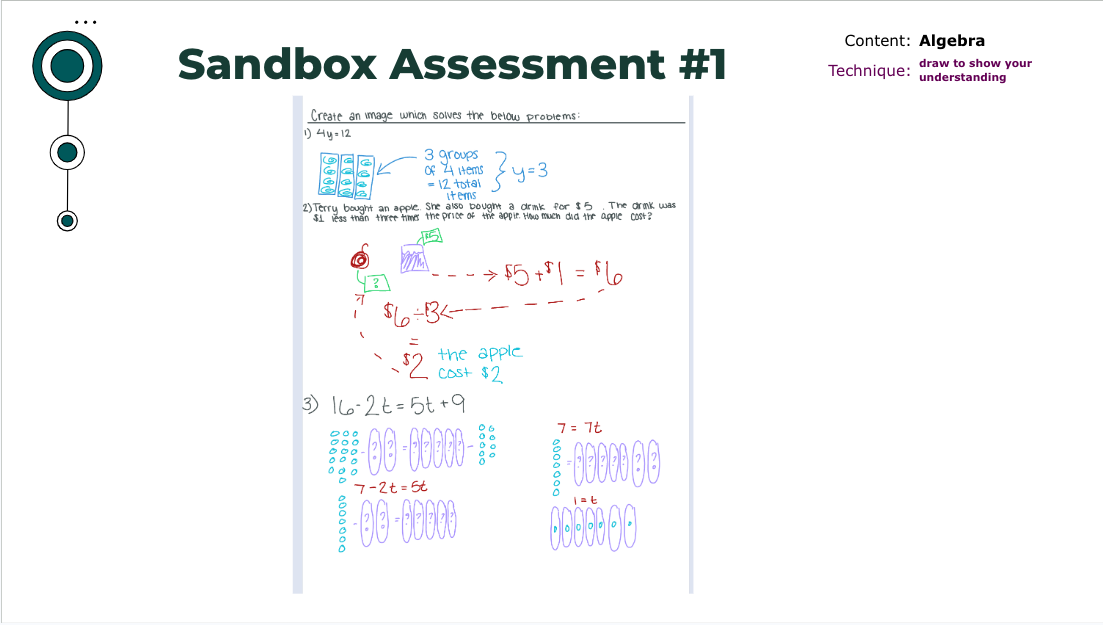

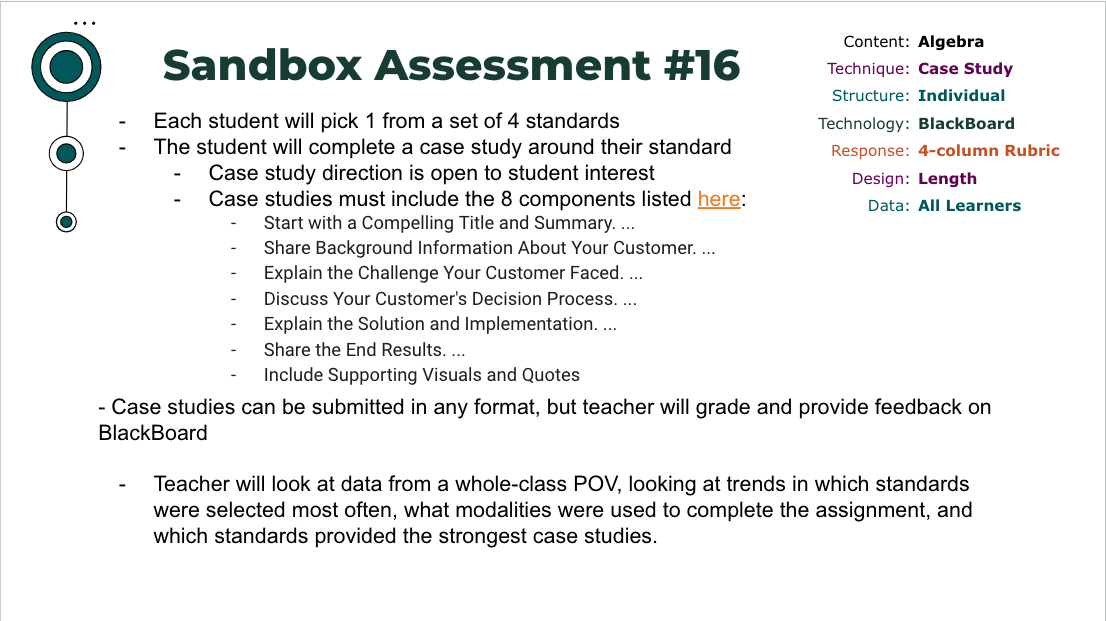

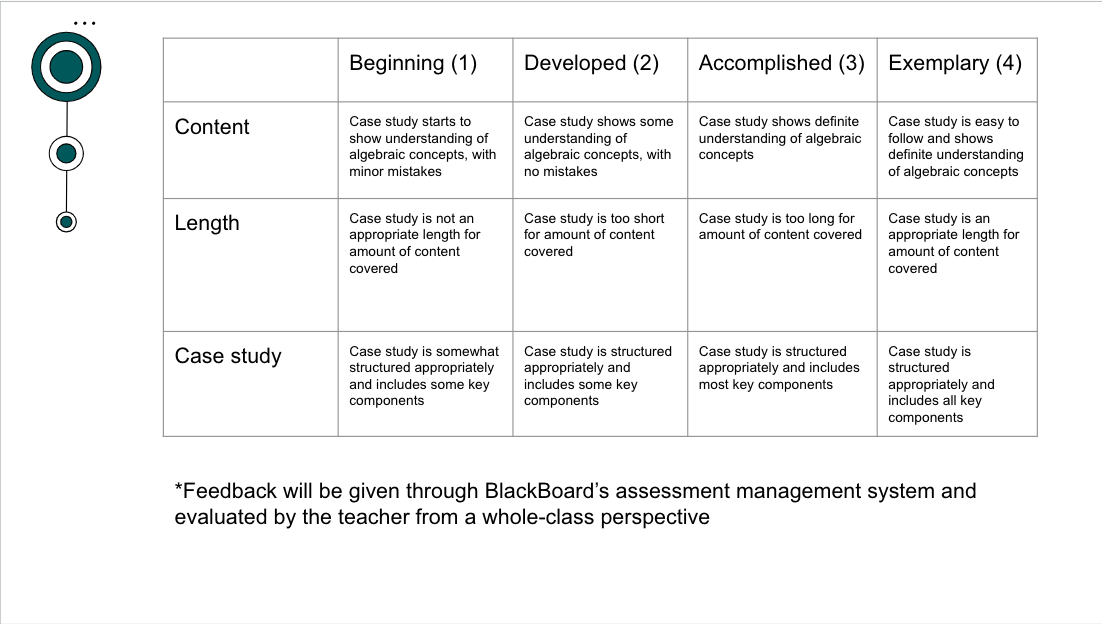

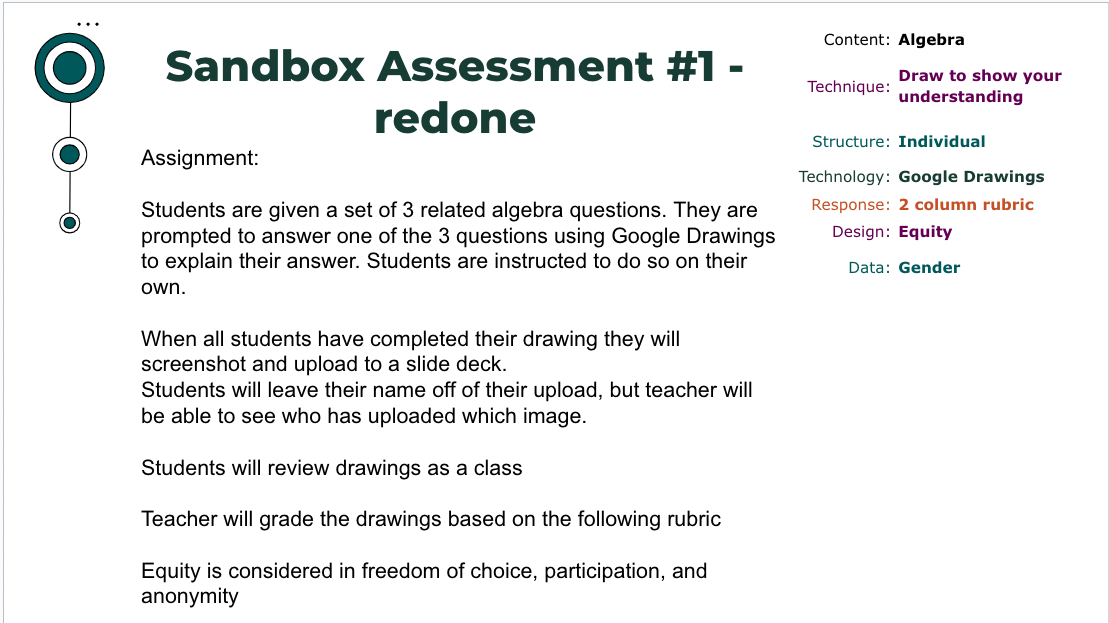

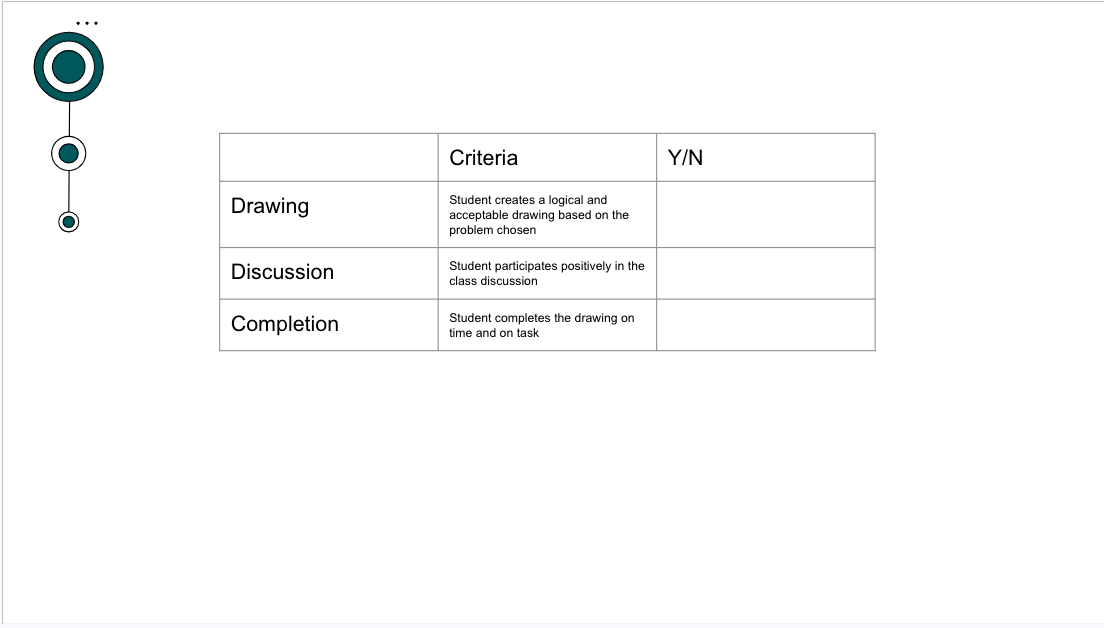

Can you identify 4 similarities between the first two images and the second two images? When I tasked myself with this exercise I came up with:

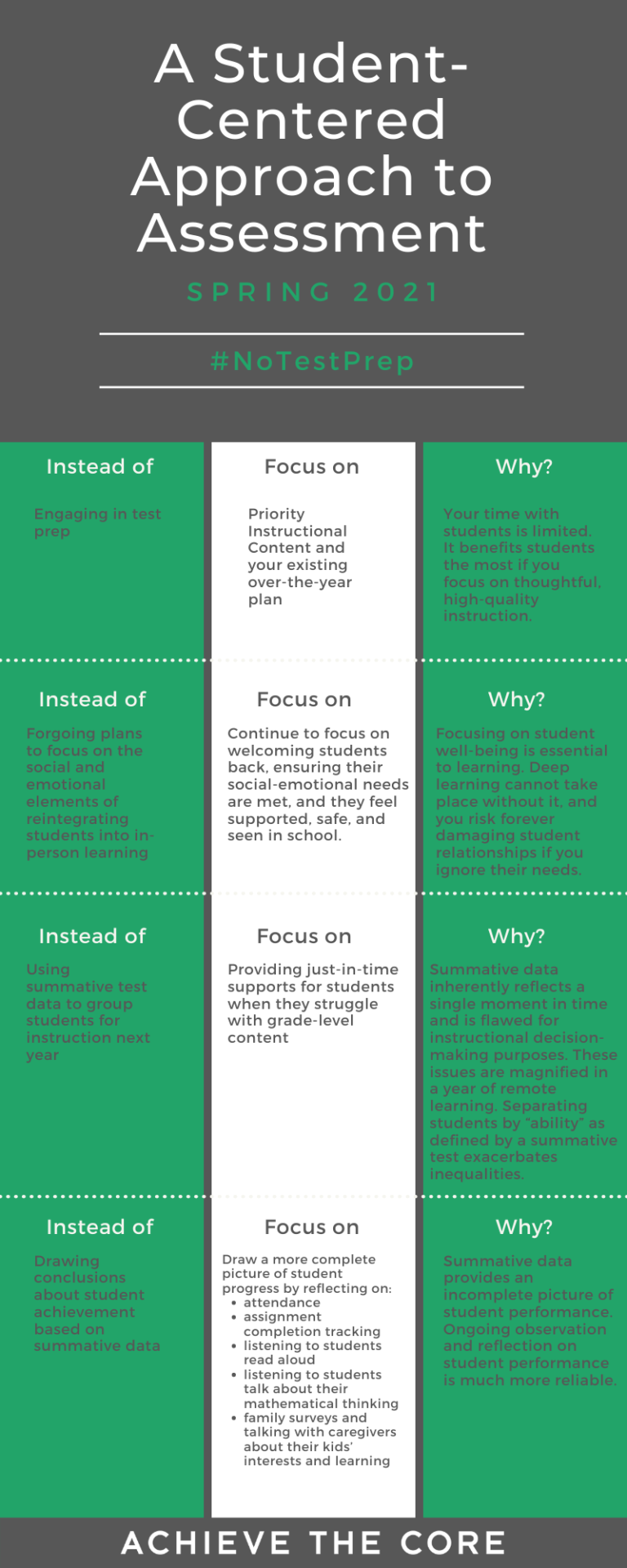

Did you come up with something along the same lines? I wouldn’t be surprised, since there are not a whole lot of similarities here. Now, if you asked me the differences between these two assessments, I could probably hand you an entire list. To begin, these assessments show the growth of an entire semester. The first sandbox assessment was created before expanding my knowledge of assessment techniques, technologies, structures, design, and data collection. I was given a technique to center an algebra assessment around, but that was the only constraint in my box. This gave me lots of room to play. What I found interesting is how much flexibility I had in my first assessment, yet how little flavor I gave it - I had the room to play but didn’t take advantage of it. Somehow, having the constraints placed on my assessment made me think outside the box (or inside the ‘sand’box in this case), and forced me to consider these different aspects of assessment. Because I had to include certain components in my assessment, I had to think about how these categories play an important part in assessment. Adding one category at a time throughout the semester allowed me to shift my attention to a new aspect of assessment each week, and figure out how I was going to highlight the importance of each.

Knowing what I now know about assessment, I have redone the first sandbox assessment to see what I could come up with! Although having the constraints of each category placed on me made my assessments a little wonky, I now see the potential that my first assessment had. I included technologies, data collection techniques, a clarified response format, and a structure for the assessment. I would love to hear your thoughts on the improvements made!

In the last few weeks I have been diving into data and assessment. This is coming off the tail end of my course’s last unit involving AI.
While I was in a synchronous Zoom meeting with some of my classmates, one of them brought up the realization that AI doesn't know students like teachers know students. This classmate had made a lesson / assessment plan using AI, and had noticed that while the overall structure was good, what AI couldn’t compete with was the teacher’s ability to know their students on a personal level - their growth, how and where they struggle, how and where they flourish, and what helps them achieve the most success. With this conversation in mind, I dove into exploring assessment data with a student-centered approach. While I explored, a theme that continued to emerge was how to create assessments that produce data reflective of the whole student. Assessment data can be a really useful tool, but it can also really harm students if used in the wrong way. Many times, administration can pull assessment data from a class and reduce students to mere numbers and labels. This removes all humanization, fairness, and equitable feedback from assessment, and defeats the purpose of assessment. Don’t we assess students to find out how they are doing overall? How can we tell how they are doing as a human from a few true / false or multiple choice problems? The reality is that we can’t. That said, there is a valid need for this summative assessment data on a larger scale. Even though it may seem frustrating on a small scale, this data is important to school and district growth overall. As a teacher, learning how to approach large assessments with students and what to do with data after assessments can change your relationship with assessment. This infographic, put together by Keown et al. (2021), is a wonderful jumping-off point for teachers. In particular, the third row, “using summative test data to group students”, seemed really important to me. Assigning students labels based on assessment data can be super harmful.
Imagine you missed a few days of school during the height of fraction instruction, and you have not been able to catch up on what you missed yet. Test day comes around and you still do not understand fractions, but the mathematics portion of the assessment is 50% fraction based. Through no fault of your own, you don’t know how to answer any of those questions, run out of time, and end up nearly flunking the assessment. Because you placed in a certain percentile, you now have to spend an hour of your day in math intervention. This causes you to miss key time with your classmates, learning ELA content. You subsequently fall behind in ELA... and the cycle continues.

Just as the inadequate AI lesson highlighted the importance of humanity in education, so does interpreting assessment data. Teachers know their students, and if this student’s teacher looked at their assessment results, they would be able to identify why the student scored in such a way, provide accurate feedback, and prevent labeling.

References:

Keown, K., Fossum, A., Li, J., & Young, J. (2021, March 25). What the data can’t tell us [Image]. Achieve the Core.

AI is inevitably going to bleed into the education world (if it hasn’t already), and if teachers, students, and all those in the education sector don’t learn to make it our friend, it has the potential to become our enemy. In my professional life we have started the conversations around how we can use AI for our benefit and jump ahead of the inevitable. One way my company is looking at using AI is by making processes easier and more streamlined on the operations end. This would be our entry into the world of AI in work life before diving into how it could impact our product. Because AI is still so new, it will be a long time before our company places any product with AI influence in front of a teacher, let alone a student. Teachers may not have this kind of luxury though, and will have to adapt sooner rather than later to ensure students are educated on how to use this tool properly and ethically. One way to introduce AI to students could be modeling how to use AI as “Google” rather than as a super smart classmate that students can steal homework from. Teachers can model this by asking AI for ideas on how to assess students on their most recent geometry unit, which covers X, Y, and Z topics. Students can vote on whether they like the AI suggestion, or would like to search for another. The same process can be repeated, asking AI how the teacher should provide feedback, grade assessments, and even how students could use its assistance to complete the assignment. Monash University (n.d.)
suggests that students provide the exact prompt that was entered into the system, and any and all changes that were made. Monash University’s Learning and Teaching: Teach HQ site also has a chart of student tasks and the ability of AI to complete them. Tasks like closed / short answer questions are quite easy for AI, whereas tasks such as interviews and recordings are much more difficult. While this list is a great reference point for the ability of AI, I would caution against relying on its accuracy as AI continues to evolve and grow. Any way you spin it, the most important factor when introducing AI to students like this is to make sure that the student maintains a critical eye while interacting with the technology and checks the validity of all AI outputs. This video shows what can happen when you entrust your translation to Google Translate, which is a great (and funny) way to emphasize the importance of critical thinking with technology, and how vital human knowledge is. For now, teachers should start learning how to make AI their friend while administration and larger corporations tackle the long-term impacts of AI on education.

References:

Learning and Teaching: Teach HQ. (n.d.). Generative AI and assessment. Monash University.

“Let It Go” From Frozen According to Google Translate. (2014). YouTube. https://www.youtube.com/watch?v=2bVAoVlFYf0

This past week I was in Boston, Massachusetts attending my summer team meeting. While the theme of the meeting was “incorporating DEIB practices into our daily work”, I walked away with a slightly different message.

This meeting was the first time that I have met any of my coworkers in person. Although I have been working with this team for over a year now, I have only ever met my team members over Zoom. While I have started to form relationships with many of them, there was most definitely some aspect of those connections that was missing. What I now realize is that the element that was missing was the “humanizing” element. As I return from my time in person with my team, I can’t help but feel empathy for those who may never fill this gap in their connections, especially students in online courses. Having been an in-person, hybrid, and virtual student, I know the effects of each modality and the importance of humanizing tools for instruction to fill in gaps that come with the online and hybrid modalities. The good news is that there are guides to make humanizing instructional tools easier. Pacansky-Brock’s (2020) eight humanizing elements lay out eight different tools that you can include in your online course to ensure that your students are more than just “names on screens”. One tool that I plan to implement in my workplace instructional tools is bumper videos. These videos are short checkpoints within course content that clarify sticking points and differentiate learning.

How I am going to implement this tool:

My company uses tools called “Job Aids”. These job aids are documents that live in a SharePoint site and guide you through processes. This is a broad definition, because they are used for a broad range of tasks. For almost any process I may need to learn, I am able to find a Job Aid in our company SharePoint site. This makes for a convenient learning experience, allowing me to reference instructions whenever and however often I need to. While these documents are great for this purpose, they lack humanization.
By implementing bumper videos, you are not only adding a human element to the tools, but also incorporating differentiation and checkpoints, and providing multiple modalities for multiple learning needs. These bumper videos can be placed throughout the document, screen sharing an example of a difficult aspect of the process, or included at the end of the document, outlining the entire process and focusing on any common sticking points. To view how I added bumper videos to a Job Aid, click here.

How you can implement this tool:

In a K-12 online classroom, these bumper videos can be placed in the middle of a module. If there is a key term you want students to remember, you can create a short 15-20 second video of yourself demonstrating that term, connecting that term to your life or their lives, or spelling and defining the term using your own voice. By simply placing a voice with words, human connection can be emphasized and students can be connected to content. In an online learning environment outside of the K-12 world, bumper videos can be used in the same way. Can you make a connection between current content and past or future content? Can you place a video in the middle of your module encouraging students to silently reflect for 2 minutes on the content learned previously?

For more assistance with humanizing your online courses and/or creating your own bumper videos, check out the content below:

Michelle Pacansky-Brock’s “Bumper Videos and Microlectures”

George Mason University: “Humanizing Your Online Course: Using Brief “Imperfect” Videos”

California Community Colleges: “How and why to humanize your online course”

References:

Pacansky-Brock, M. (2020). How to humanize your online class, version 2.0 [Infographic]. https://brocansky.com/humanizing/infographic2

Let me paint a scene for you: “Line up outside - no talking. When you come inside, put your phone in a basket at the front of the room. Sit down at your seat, not too close to anyone else.
A Scantron and a test booklet will be passed out to you shortly - don’t touch them until I say so. You have exactly 50 minutes to complete your 60 question exam. No talking, keep your eyes on your own desk, raise your hand if you have a question. If you must go to the bathroom, you will leave your Scantron and test booklet with me. Tests will be graded by the end of the day and your final course grade will be riding on this exam.”

Does this sound familiar? This scene was based on my final chemistry exam day, but I bet you were imagining a personal experience of your own. When asked to think about my worst assessment, memories of long nights studying, mornings filled with stress, anxiety, and anticipation, and mere moments before high school exams came flooding back to mind. As I was picking through the pile of horrible assessments, trying to give one the title of “Worst Assessment Ever”, I found that these high school exams all had one thing in common: structure. What makes an assessment bad isn’t the teacher, the content, or the preparation. Bad assessments sprout from bad structures, and standard high school exams are notorious for sharing the same bad structure. The exams lack humanity, personality, individuality, and character. They are dry, unmemorable, and many times downright frustrating. The Universal Design for Learning Guidelines outline three standards for learning: providing multiple means of engagement, representation, and action and expression (CAST, 2018). When utilized correctly, the goal of these guidelines is to produce learners who are purposeful and motivated, resourceful and knowledgeable, and strategic and goal-directed. I can confidently state that my final chemistry exam did not produce any of the above in me as a learner. So, what did this exam (and the hundreds like it) accomplish? The teacher was able to tell how well her students could memorize facts, test each student in an identical format, and eliminate any potential bias in her grading. But this exam did not provide differentiation in engagement, representation, or action and expression, and completely missed the mark on the UDL Guidelines. My class could have been assessed in limitless other formats (cumulative project, recreating a lesson, fishbowl discussion, real-world applications, etc.) which would have more potential to meet the UDL Guidelines, but this teacher defaulted to the same dry exam format.
As I have grown in my understanding of the pressures and constraints teachers are placed under, I have learned to give my teachers more grace when critiquing their courses. I want to emphasize that this teacher was a phenomenal professional, and I had many positive learning experiences in her classroom. My previous critiques are focused on the assessment as a product, and not on the teacher as a person. In certain cases, the standard “Scantron and test booklet” exam may be the best possible option for assessment, but even within these rote exams it is important to give students the best possible opportunity for success and learning. Incorporating the aforementioned UDL Guidelines provides this opportunity for students.

References:

Center for Applied Special Technology (CAST). (n.d.). UDL Guidelines. CAST. https://udlguidelines.cast.org/

The phrase “high-stakes assessment” can bring up a range of emotions for many learners. Most people have had a plethora of bad experiences with tests, or assessments, throughout their education. But what about the assessments that are done right? These instances should hold just as much importance as the assessments that are done wrong.

One instance of a really good assessment that sticks out to me is a project I completed in the final year of my undergraduate education. This project was a “Microteaching Assignment” which assessed three peers and me on our ability to research and understand content, create an equitable and accessible learning experience, and incorporate proper pedagogical strategies. Looking back on this project, although I did not have the precise name for it, the TPACK framework (Mishra & Koehler, 2006) was seamlessly present throughout the lesson. My peers and I combined our technological, pedagogical, and content knowledge to create a robust lesson for our peers. So, what made this assessment so good? To begin, it was assessed qualitatively and took into account peer input, teacher feedback, and audience experience. The format of the assessment had a general outline and key components we had to include, but it could be molded into a final product that worked for each individual group. This placed the emphasis on the learning experience rather than on a checklist of test items. The assessment was high stakes but low pressure, which allowed my peers and me to present to the best of our ability as well. The open-ended nature of this assessment fought the “Unequal by Design” phenomenon described by Au (2008). I want to emphasize a few points here that are critical in understanding why and how this assessment was facilitated.

My Lesson Plan

My Visual Aids

References:

Au, W. (2008). Unequal by design: High-stakes testing and the standardization of inequality. Routledge.

Mishra, P., & Koehler, M. J. (2006). Technological Pedagogical Content Knowledge: A framework for teacher knowledge. Teachers College Record, 108(6), 1017-1054.

This semester in my CEP assessment course, I am going to be exploring assessment. Last semester, I created a final Theory of Learning, outlining my theories of what learning is, where it takes place, and how it happens. In this blog post I am going to explain an (extremely brief) Theory of Assessment, outlining personal theories similar to my Theory of Learning.

What is assessment? I believe that assessment can be defined as any tool or practice used to gauge knowledge and understanding. Assessment comes in all shapes and sizes, ranging from 30-second exit tickets to 8-hour graduate school entrance exams. Some assessments are formative, others are summative. Assessments can be creative or rigid, high-stakes or low-stakes, collaborative or independent, and, most importantly, a learning opportunity or not. With a wide range of assessments at teachers’ fingertips, there are endless possibilities for differentiation in daily, weekly, and yearly assessments.

Many students have a difficult relationship with assessment at the high-stakes level. Society places a large value on assessment performance, which can in turn cause assessment anxiety and/or an uneasy relationship with assessment. Since I believe the goal of assessment is to gauge learning, this is extremely problematic when students are too nervous to accurately communicate their knowledge. Because of this disconnect, I strongly believe that assessments should be flexible and individualized.

Shepard (2000) argued that “the measurement approach to classroom assessment … presents a barrier to the implementation of more constructivist approaches to instruction” (p. 4). This summarizes my view of current popular assessment strategies. While implementing constructivist learning environments is beneficial for students, coupling them with rigid classroom assessments creates a large disconnect. This disconnect can also lead to a mismatch between what students know and how they perform.

With this in mind, my view is not anti-assessment but rather pro-assessment-differentiation. We know that students learn best in their individual optimized environments. This needs to be applied to assessment as well, and it generally is not.
Just as some students prefer to learn in a hands-on, active environment and others prefer a more structured, direct-instruction environment, some students may feel less pressure when their knowledge is assessed through creative and/or collaborative projects, whereas others may feel more pressure when faced with creative projects. Assessment is an important aspect of learning, but I firmly believe that assessment should be studied and treated in the same manner as we treat instruction.

Assessment is a critical tool and part of the learning process. Without assessment, teachers won’t know if their students are learning, and similarly students won’t be able to push their knowledge to the next level. But assessment doesn’t have to be scary. I think there is a lot of work that needs to be done to reform the assessment standards in our society and break down some of the generalized beliefs around assessment. A pro-differentiation view of assessment is possible with support, research, and work.

References:

Shepard, L. A. (2000). The role of assessment in a learning culture. Educational Researcher, 29(7), 4-14.
Alberto G. (June, 2011). Exam [Photograph]. Flickr. https://www.flickr.com/photos/4311409835

This week, I explored some new (and old) technologies used to support algebra learning. I have been sharing a lot of what I have learned in my CEP 805 course through these blog posts, but I realized I rarely discuss my background and where my mind is before learning all of the wonderful content in this course. So, before I dive into what I learned, I first want to outline the mindset that I entered this week with.

Algebra is one of my favorite content areas in math for a multitude of reasons. I have had outstanding algebra teachers in my life who had robust Mathematical Knowledge for Teaching (Hill & Ball, 2009), combining each of the six areas of MKT to teach their students thoroughly. These teachers made me fall in love with math and lit a spark in me that has grown larger the further I explore the content. Because of my relationship with algebra content, I have formed specific relationships with the tools I use in algebra learning. I was excited entering this week’s technology exploration because I have always found algebra technologies very useful.

While watching a peer’s reflection on the technology “Wolfram Alpha”, I realized that I have a different relationship with certain technologies when they are used in algebra settings than when they are used with other content areas (e.g., calculus). I was always cautious about using this specific app with students because I had only ever used it in a calculus setting, after spending hours attempting problems to no avail and resorting to the app to find the right answer. Because I had a very specific connection between this app and my calculus experience, I always assumed other students would use it in the same way, and I wanted to avoid its use entirely.

The narrative changed when I looked at a very similar app, PhotoMath. I had used PhotoMath in my algebra courses and had a positive relationship with it. Even though this app is structured the same way as Wolfram Alpha, I used it to assist my learning rather than merely to get the right answer. It was used in low-stakes environments, with no stress or expectations. My teacher allowed the use of PhotoMath in introductory lessons. We would spend 5-10 minutes attempting to solve a problem before learning the content.
We would then spend 5 minutes with the PhotoMath answer, getting acquainted with the process for ourselves, and then would dive into the content and learn what each of the steps in PhotoMath told us. In this way, we used the same type of technology in a way that allowed us to form a positive relationship with it and not abuse it. Looking back on this use, I can see that my teacher had a really developed Knowledge of Content and Students (Hill & Ball, 2009): knowing how we learn, what tools were out there, and how we might abuse them, and setting the norm for how they should be used before we had the chance to abuse them.

Moving forward with my learning, I want to keep these realizations in mind and address any other biases I may have towards certain technologies before I explore them further.

Resources:

Hill, H., & Ball, D. L. (2009). The curious - and crucial - case of Mathematical Knowledge for Teaching. Phi Delta Kappan, 91(2), 68–71. |
